The architecture of computing is no longer just evolving; it is fragmenting into competing paradigms that must somehow work as one. What emerges next will define not only performance but also whether AI itself can scale beyond its current constraints.

The language of progress in computing has always been deceptively simple. Faster processors, denser chips, more efficient systems. For decades, the industry advanced along a familiar path, pushing silicon to its limits while software adapted to extract incremental gains. That trajectory is now breaking down, not gradually but decisively.

Professor Dr. Martin Schulz, Chair of Computer Architecture and Parallel Systems at the TUM School of Computation, Information and Technology, does not frame this as a continuation of the past. He describes it as a rupture. As the opening keynote speaker at ISC High Performance, he will argue that the industry is entering what may be the most significant architectural shift in the history of supercomputing.

The implications extend far beyond high performance computing. They reach into the foundations of AI, enterprise infrastructure, and the limits of what digital systems can realistically achieve.

“The way we used to build systems was based on making the same components better, increasing frequency, adding capacity, refining architectures,” Schulz says. “That approach has run into fundamental limits. We are now seeing a shift where simply scaling what we already have is no longer sufficient, and the industry is being forced to explore entirely new directions.”

The turning point has been building for some time. GPUs extended the life of traditional computing by accelerating specialised workloads and enabling parallelism at scale, but even that model is now under pressure. Energy consumption, supply constraints, and diminishing returns are forcing a broader rethink.

“GPUs have taken us a long way, but they can only take us so far,” Schulz continues. “We are running into energy constraints, we are running into availability issues, and the complexity of systems continues to increase. What we are seeing now is a level of experimentation across the industry that we have not seen before, where entirely new technologies are being explored to overcome these barriers.”

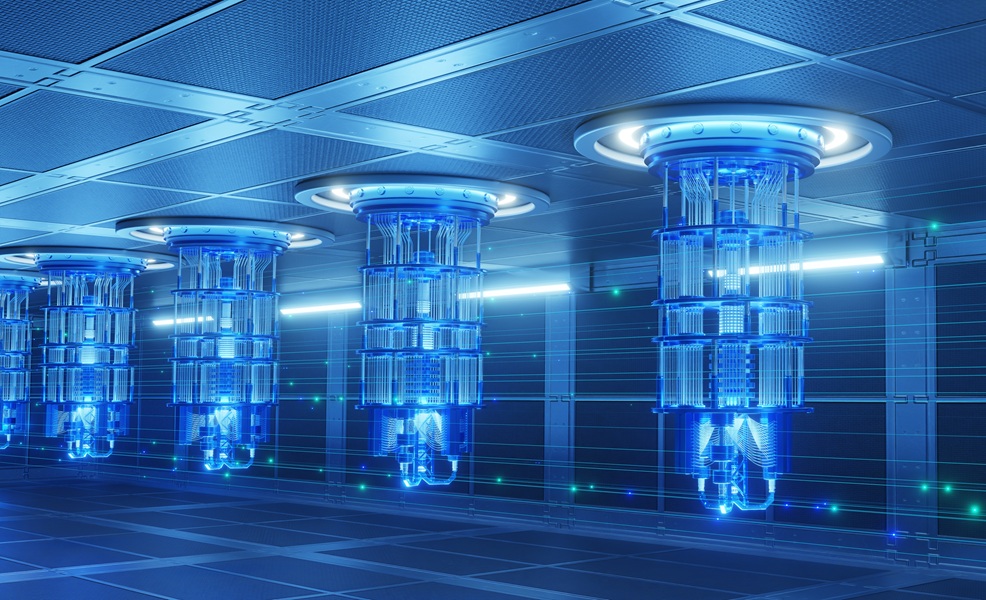

What replaces the old model is not a single successor architecture, but a growing constellation of them. Quantum computing, neuromorphic systems, photonics, and even more speculative approaches are all competing for relevance. None are mature enough to dominate. None are broad enough to replace what came before. Yet each offers something the others cannot.

“Quantum computing is fundamentally different, it uses quantum mechanical principles to solve a very specific class of problems very efficiently,” Schulz says. “Neuromorphic computing tries to emulate aspects of how the brain works, using synaptic connections and entirely different computational models. These are not incremental improvements. They represent different ways of thinking about computation itself.”

The consequence is a shift from a unified architecture to a fragmented one, where multiple technologies must coexist. That coexistence is not optional. It is the only viable path forward. “None of these technologies will replace the systems we have today,” Schulz says. “Each of them is highly specialised. What we will need to do is combine them, to use the right technology for the right sub-problem and then integrate them into a larger system that works as a whole.”
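To make the idea of "the right technology for the right sub-problem" more concrete, here is a minimal, purely illustrative sketch of how a workflow might route each part of a job to a specialised backend while a classical CPU path handles everything else. The backend functions, the `kind` labels and the routing table are all hypothetical stand-ins invented for this example; they do not correspond to any real scheduler, vendor API or system described by Schulz.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class SubProblem:
    name: str
    kind: str  # hypothetical label describing what sort of computation this is


# Hypothetical stand-ins for specialised hardware; a real system would call
# into each technology's own software stack here.
def gpu_backend(p: SubProblem) -> str:
    return f"{p.name} -> gpu"


def quantum_backend(p: SubProblem) -> str:
    return f"{p.name} -> quantum"


def neuromorphic_backend(p: SubProblem) -> str:
    return f"{p.name} -> neuromorphic"


def cpu_backend(p: SubProblem) -> str:
    # Classical fallback for anything no specialised device is suited to.
    return f"{p.name} -> cpu"


# Assumed mapping from problem kind to the technology best suited to it.
ROUTING: Dict[str, Callable[[SubProblem], str]] = {
    "dense_linear_algebra": gpu_backend,
    "combinatorial_optimisation": quantum_backend,
    "spiking_inference": neuromorphic_backend,
}


def run_workflow(subproblems: List[SubProblem]) -> List[str]:
    """Send each sub-problem to its backend and collect the results in order."""
    return [ROUTING.get(p.kind, cpu_backend)(p) for p in subproblems]


if __name__ == "__main__":
    job = [
        SubProblem("mesh_solve", "dense_linear_algebra"),
        SubProblem("scheduling", "combinatorial_optimisation"),
        SubProblem("checkpoint_io", "file_io"),
    ]
    for line in run_workflow(job):
        print(line)
```

The lookup table is the easy part. The hard part, as the rest of the article argues, is everything this sketch leaves out: moving data between devices, reconciling different programming models, and making the combined system dependable.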

The complexity wall emerges

The industry has already encountered a new kind of constraint, one that is less visible than power consumption or hardware availability, but potentially more limiting. It is not a physical barrier, but a conceptual one. “The biggest challenge we see today is complexity,” Schulz says. “Once you start combining different technologies, each with its own architecture, its own programming model, its own constraints, the system as a whole becomes extremely difficult to manage.”

This complexity is not theoretical. It is already visible in today’s hybrid systems, where CPUs and GPUs must be orchestrated across distributed environments. Developers are required to think not only about computation, but about communication between nodes, memory hierarchies, and data movement across heterogeneous hardware.

“If you look at a modern system, even with just CPUs and GPUs, the programming model is already quite complex,” Schulz explains. “You must manage communication between nodes, within nodes, and across accelerators. Each of these environments is manageable on its own, but when you combine them, you start to see interaction effects that are difficult to control.”

As new technologies are introduced, that complexity compounds. Each additional paradigm brings its own requirements, its own abstractions, and its own limitations. The result is a system that is powerful, but increasingly fragile. “For the developer, this means that the amount of knowledge required to effectively use these systems is growing rapidly,” Schulz says. “You are no longer working with a single programming model. You are working with multiple, and you need to understand how they interact.”

This is where the idea of a “complexity wall” becomes tangible. It is not that systems cannot be built. It is that they cannot be easily used, scaled, or trusted.

AI and the hidden bottlenecks

AI is often presented as the driver of this transformation, but Schulz is careful to challenge the assumption that more compute alone will solve its limitations. The bottlenecks are not where many expect them to be. “When people focus on AI, they often focus on the models themselves,” he says. “But as you scale these models, you quickly run into constraints: data movement, memory bandwidth, energy consumption. These are the underlying technical challenges.”

These factors are tightly coupled, and improvements in one area expose weaknesses in another. Increasing model size increases data movement. Increasing data movement increases energy demand. Increasing energy demand introduces infrastructure constraints. “These are all interconnected problems,” Schulz continues. “If you want to scale AI, you must address all of them. Solving one in isolation will not be enough.”
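The chain from model size to data movement to energy can be illustrated with a deliberately crude back-of-envelope model. The sketch assumes, for simplicity, that every model weight is read from memory once per generated token (batch size of one, no caching or reuse) and that memory traffic dominates energy use. The energy-per-byte constant and the parameter counts are hypothetical placeholders chosen for illustration, not measured figures for any real hardware or model.

```python
from dataclasses import dataclass


@dataclass
class ScalingEstimate:
    bytes_per_token: float
    joules_per_token: float
    watts: float


def estimate(params: float, bytes_per_param: float,
             joules_per_byte: float, tokens_per_second: float) -> ScalingEstimate:
    # Assumption: all weights are streamed from memory once per token.
    bytes_per_token = params * bytes_per_param
    # Assumption: data-movement energy scales linearly with bytes moved.
    joules_per_token = bytes_per_token * joules_per_byte
    # Sustained power is energy per token times the token rate.
    watts = joules_per_token * tokens_per_second
    return ScalingEstimate(bytes_per_token, joules_per_token, watts)


# Hypothetical placeholder; real values depend heavily on the memory technology.
JOULES_PER_BYTE = 1e-11

small = estimate(7e9, 2, JOULES_PER_BYTE, 100)
large = estimate(14e9, 2, JOULES_PER_BYTE, 100)
print(f"data moved grows {large.bytes_per_token / small.bytes_per_token:.1f}x, "
      f"power grows {large.watts / small.watts:.1f}x")
```

Even this simplified model shows why adding compute alone does not remove the bottleneck: doubling the parameter count doubles the bytes moved per token, and under these assumptions the energy and sustained power double with it, pushing the problem down into memory systems and facility power.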

This has implications for how enterprises approach AI infrastructure. Investments in compute alone may deliver diminishing returns if the underlying system cannot support the associated data and energy requirements. “There is a tendency to focus on the most visible part of the problem,” Schulz says. “But the real work is often in the layers below, in the architecture of the system, in how data is moved, how memory is managed, how energy is consumed.”

Heterogeneity as the new normal

If the future of computing is defined by multiple architectures working together, the question becomes how to design systems that can accommodate that diversity without collapsing under their own complexity. “We are still in the early stages of understanding what these systems will look like,” Schulz says. “Even with GPUs, the way they are integrated into systems is still evolving. Some are tightly coupled with CPUs, some are separate, some use different interconnects. There is no single solution yet.”

This uncertainty extends to emerging technologies. Quantum systems, neuromorphic chips, and photonic processors are often deployed as separate units, connected to traditional systems rather than integrated into them. “These technologies are still relatively isolated,” Schulz explains. “They are physically separate, they have different requirements, and we are still exploring how best to connect them.”

The challenge is fundamentally architectural. Decisions about how to integrate these components will shape the performance, scalability, and usability of future systems. “This is an active area of research,” Schulz says. “We are trying to find the right balance: how closely these technologies should be integrated, how they should communicate, how workloads should be distributed across them.”

The outcome is unlikely to be a single, universal architecture. Instead, it will be a spectrum of configurations, each optimised for different types of workloads.

Solving scale while increasing risk

The promise of heterogeneous computing is that it can unlock new levels of performance, enabling problems to be solved that were previously out of reach. The risk is that it introduces new layers of complexity and uncertainty. “We will solve the scaling problem for certain classes of problems,” Schulz says. “But at the same time, we will introduce new complexity and new risks.”

These risks are not limited to technical challenges. They extend to planning, investment, and long-term strategy. Instead of managing a single technology roadmap, organisations must now navigate multiple, interdependent ones. “You are no longer dealing with one technology that evolves over time,” Schulz explains. “You are dealing with multiple technologies that need to evolve together, that need to align with each other.”

This creates new forms of dependency. Delays or limitations in one area can impact the viability of others. The system becomes more powerful, but also more sensitive. “This is something we have seen before,” Schulz says. “Over time, systems have become more complex as we have tried to extract more performance. What we are seeing now is a continuation of that trend, but at a much larger scale.”

The software question

If hardware is fragmenting, software must become the unifying layer. Without it, the system becomes unusable. “The software stack will be absolutely critical,” Schulz says. “Right now, each technology is developing its own stack. Classical systems are relatively mature, but newer technologies like quantum and neuromorphic computing are still very specialised.”

The danger is that developers are left to bridge these systems themselves, stitching together incompatible tools and frameworks. “That is not sustainable,” Schulz continues. “We cannot expect developers to manage multiple independent stacks and integrate them manually. The integration must happen at a higher level.”

The solution is not simply standardisation, but compatibility. Different stacks must be able to communicate, to share data, and to operate within a unified framework. “We need software stacks that work together, that are compatible, that reduce the complexity rather than adding to it,” Schulz says. “This is essential if we want to make these systems usable at scale.”

This is where much of the real innovation will occur. Hardware may define what is possible, but software will determine what is practical.

Quantum moves closer to reality

Among the emerging technologies, quantum computing remains the most conceptually challenging. Its potential is widely discussed, but its practical deployment remains uncertain. “We have made significant progress,” Schulz says. “We have moved from purely experimental systems to something that can be used at a small scale. The industry is advancing quickly.”

Roadmaps suggest that more capable systems could emerge in the early 2030s, but even then, their role will be specialised. “Quantum computing will not replace classical systems,” Schulz explains. “It will complement them, solving specific types of problems that are difficult or impossible with traditional approaches.”

The integration challenge is therefore unavoidable. Quantum systems must work alongside existing infrastructure, not in isolation. “There is a perception that quantum computing will require entirely new data centres,” Schulz says. “In reality, we have shown that it can be integrated into existing environments, with some adjustments.”

At the Leibniz Supercomputing Centre in Munich, as part of the Munich Quantum Valley (MQV) efforts, quantum systems are already operating alongside classical HPC infrastructure. The engineering challenges are non-trivial, but they are not insurmountable. “It takes effort to integrate these systems, but it is possible,” Schulz says. “And it is necessary, because they need to work together.”

Photonics and the expanding frontier

Photonics represents another frontier, offering the potential for highly efficient computation in specific domains. While Schulz is cautious in his assessment, he recognises its potential. “Photonics is a promising technology, particularly for certain types of operations,” he says. “It can be very efficient, especially for specific linear algebra problems.”

As with other emerging paradigms, its role will be specialised rather than universal. Its value lies in how it can be integrated into a broader system. “It fits into the same pattern as the other technologies,” Schulz explains. “It is useful for particular problems, and it needs to be integrated with the rest of the system.”

The growing number of options creates both opportunity and complexity. System designers must make decisions not only about performance, but about which technologies to include at all. “When you design a system now, you have to ask which technologies your users need, which problems they are trying to solve, and how to incorporate the right components,” Schulz says. “It is no longer a one-size-fits-all approach.”

Toward a unified future

The trajectory of computing is no longer defined by a single path. It is defined by convergence, by the need to bring together diverse technologies into a coherent whole.

For Schulz, the central challenge is not just technological, but conceptual. It is about making this complexity manageable. “We need to find ways to integrate these technologies in a way that is usable,” he says. “That means developing unified software stacks, reducing complexity, and making these systems accessible to a broader range of users.”

This is not a short-term problem. It is a structural shift that will shape the next decade of computing. “We are at the beginning of this transition,” Schulz says. “There is a lot of work to be done, but there is also a lot of opportunity. If we get this right, we can enable entirely new kinds of applications and discoveries.”

That is the message he will bring to the stage at ISC High Performance, where the industry gathers to define its next steps. His keynote will offer not a single solution but a framework for understanding the scale of the challenge and the direction of travel. “What I want to highlight is the shift we are seeing, the move towards a much more complex, heterogeneous world, and the importance of addressing that complexity,” Schulz says. “Because if we can manage it, the potential is enormous.”

As AI continues to push against the limits of current systems, that potential will be tested. The question is no longer how to make computing faster, but how to make it fundamentally different.

ISC High Performance brings together the global HPC community to explore exactly these questions, from emerging architectures to real-world deployment challenges. Illuminaire is a media partner for the event, and further details on the programme and keynote sessions can be found at https://isc-hpc.com/.