Artificial intelligence is no longer being built as software; it is being industrialised as output. The shift from models to tokens marks the moment AI becomes an economic system rather than a technological one.

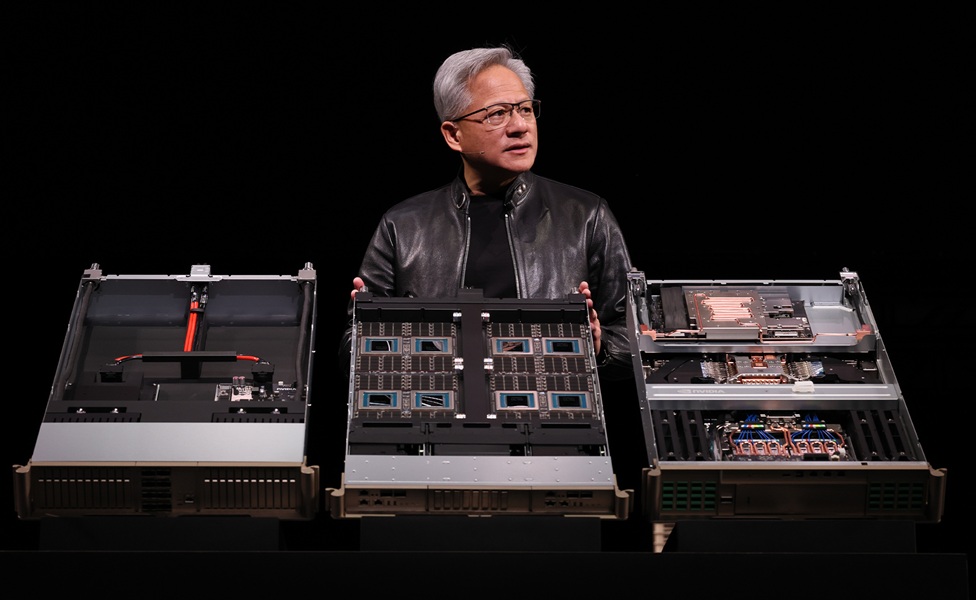

For more than a decade, artificial intelligence has been framed as a race: first to build better models, then to scale them, and more recently to apply them across industries. That framing no longer holds. What Jensen Huang, NVIDIA's CEO, set out in his keynote at GTC in San Jose was something far more structural: a redefinition of AI not as a tool or a capability, but as a system of production in which intelligence itself is generated, measured, and monetised.

“Intelligence has made a new kind of factory, a generator of tokens, the building blocks of AI,” he says. “Tokens have opened a new frontier, turning data into knowledge and drawing on all we have learned. Tokens are harnessing a new wave of clean energy and unlocking the secrets of the stars. In virtual worlds, they help robots learn, and in the physical world, they are forging new paths. Tokens are already there in the moments that matter, and in the miles between they never stop, they work where human hands cannot.”

That passage is expansive, almost poetic, but its meaning is precise. AI is no longer defined by what it can do, but by what it produces, and the unit of that production is the token. Once that shift is made, the rest of the keynote falls into place, because every decision, every architecture, every investment is oriented around maximising the generation of that output.

“This is your token factory,” he continues. “This is your AI factory. This is your revenues. Every CEO in the world will study their business from now on in the way I am about to describe, because this is your token factory, this is your AI factory, this is your revenues. What you do this year will show up precisely next year as your revenues.”

The repetition is deliberate, and it lands with weight. Infrastructure is no longer an enabler of value; it is the mechanism through which value is created. The data centre is no longer a passive environment; it is an active production system, and the performance of that system is directly tied to financial outcomes.

The emergence of a new economic unit

The introduction of tokens as the primary unit of value is not a technical detail; it is an economic shift that redefines how AI is understood and deployed. Huang does not describe tokens as part of the model. He describes them as the product, something that can be priced, segmented, and optimised in the same way as any other industrial output.

“Tokens are the new commodity, and like all commodities, once it reaches an inflection, once it becomes mature, it will segment into different parts,” Huang continues. “The high throughput, low speed could be used for the free tier. The next tier could be the medium tier, larger model, higher speed, larger input context length that translates to a different price point. The higher the tier, the higher the quality, the higher the performance, the lower the volume.”

This is not software pricing; it is market design. Intelligence is being broken down into tiers, each defined by performance characteristics and each carrying a different economic value. The implications are immediate: organisations are no longer choosing whether to use AI, but what level of intelligence they are willing to produce and pay for.

“Suppose you were to use 50 million tokens per day as a researcher at $150 per million tokens,” Huang muses. “As it turns out, as a research team, that is not even a thing. We believe this is the future. This is where AI wants to go. This is where it is today, and in the future, you are going to see most services encompass all of that.”

The scale embedded in that example is telling, because it highlights how quickly intelligence moves from novelty to necessity once it becomes measurable. Tokens are not just outputs; they are inputs into further reasoning, further generation, further production, creating a system where demand compounds on itself.
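The arithmetic behind Huang's example is easy to make concrete. The sketch below takes the two figures from the quote (50 million tokens per day, $150 per million tokens) and works out the daily bill. The annualised figure assumes usage on every calendar day, which is an assumption of this sketch rather than something stated in the keynote, and the function and constant names are purely illustrative.

```python
# Figures quoted in the keynote example.
TOKENS_PER_DAY = 50_000_000
PRICE_PER_MILLION_USD = 150.0


def daily_token_cost(tokens_per_day: int, price_per_million: float) -> float:
    """Return the cost in dollars of one day's token consumption."""
    return tokens_per_day / 1_000_000 * price_per_million


daily = daily_token_cost(TOKENS_PER_DAY, PRICE_PER_MILLION_USD)

# Assumption of this sketch: the same usage on all 365 days of the year.
annual = daily * 365

print(f"Daily cost:  ${daily:,.0f}")
print(f"Annual cost: ${annual:,.0f}")
```

On those figures, a single researcher's usage comes to $7,500 a day, or roughly $2.7 million a year at constant use.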

That compounding demand introduces a constraint that Huang returns to repeatedly, grounding the conversation in physical reality rather than abstract scale.

“Every data centre, every single factory, by definition, is power constrained,” he explains. “A one gigawatt factory will never become two. It is physically constrained by the laws of atoms, the laws of physicality, and so that one gigawatt data centre you want to drive the maximum number of tokens, which is the production, the product of that factory.”

This is the moment where the narrative tightens, because it introduces a limit that cannot be engineered away. Intelligence may be virtual, but its production is bound by energy, space, and infrastructure, forcing a level of optimisation that transforms how systems are designed.

From computing to industrial systems

If tokens are the product and power is the constraint, then the data centre becomes something fundamentally different from what it has been before. Huang describes this transformation not as an evolution but as a re-architecture of computing itself, driven by the need to maximise output within fixed limits.

“Moore’s Law has run out of steam,” he says. “We need a new approach. Accelerated computing allows us to take these giant leaps forward, and because we continue to optimise the algorithms and because our reach is so large, we can reduce the computing cost, increasing the scale, increasing the speed for everybody continuously.”

The emphasis here is not just on performance, but on the relationship between performance and cost, because the viability of the token economy depends on both. Producing more tokens is only valuable if they can be produced efficiently, and Huang’s argument is that this efficiency is achieved through the co-design of hardware, software, and algorithms as a single system.

“In one generation, nobody would have expected 35 times higher,” Huang says. “Moore’s Law would have given us one and a half times more performance. Nobody would have expected 35 times higher, and in fact it is closer to 50 times. Our cost per token is the lowest in the world, and if you have the wrong architecture, even if it is free, it is not cheap enough.”

The statement is striking because it collapses the distinction between cost and architecture, suggesting that efficiency is not a by-product of design but its primary objective. The system is not built to run workloads; it is built to produce tokens as efficiently as possible, and every component is optimised towards that goal.
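The link between the fixed power budget and architectural efficiency can be shown with a small calculation. The sketch below assumes that the 35-times figure is read as token output per unit of power, which the quote itself does not specify, and it uses a hypothetical baseline efficiency that is a placeholder rather than a measured value. Only the one-gigawatt budget and the 35-times multiple come from the keynote.

```python
# From the keynote: a factory capped at one gigawatt.
POWER_BUDGET_WATTS = 1e9

# From the keynote: the quoted generational gain. Treating it as a gain in
# tokens per unit of power is an assumption of this sketch.
GENERATION_GAIN = 35

# Hypothetical placeholder, not a measured value for any real system.
BASELINE_TOKENS_PER_JOULE = 0.5


def tokens_per_second(power_watts: float, tokens_per_joule: float) -> float:
    """Token output of a factory running at a fixed power draw.

    One watt is one joule per second, so power multiplied by
    tokens-per-joule gives tokens per second.
    """
    return power_watts * tokens_per_joule


old_output = tokens_per_second(POWER_BUDGET_WATTS, BASELINE_TOKENS_PER_JOULE)
new_output = tokens_per_second(
    POWER_BUDGET_WATTS, BASELINE_TOKENS_PER_JOULE * GENERATION_GAIN
)

# The power budget cancels out: the output ratio equals the efficiency ratio.
print(f"Output multiple at fixed power: {new_output / old_output:.0f}x")
```

Because the power budget is the same on both sides, it cancels out of the comparison. Under these assumptions, the only way to raise a factory's output is to raise the tokens produced per joule, which is why architecture and cost become the same question.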

That optimisation extends beyond individual systems to the broader structure of computing itself, as Huang describes a shift from general-purpose infrastructure to specialised environments designed around production. “We have now set the computing platform, but to activate those computing platforms we need domain-specific libraries that solve very important problems in each one of the verticals that we address,” Huang adds. “We are an algorithm company. That is what makes it possible to go into every single one of these industries, imagine the future, and turn it into something that can be executed at scale.”

This is where the industrial analogy becomes unavoidable. The system is no longer generic; it is specialised, optimised, and integrated, designed to produce specific outputs at scale.

The moment AI becomes an industry

The final shift in Huang’s narrative is the move from technology to industry, where AI is no longer a layer within existing systems but the foundation of a new economic structure. The scale of that shift is captured in his assessment of demand, which he frames not as growth but as an explosion.

“The amount of computation in the last two years has increased by roughly 10,000 times,” he says. “The amount of usage has gone up by 100 times. When I combine these two, I believe that computing demand has increased by one million times in the last two years. It is the feeling that every startup has, every AI lab has, that if they could just get more capacity, they could generate more tokens, their revenues would go up, more people could use it, and the AI could become smarter.”

This is not incremental adoption; it is systemic transformation, and it explains why the shift to tokens matters. Once intelligence becomes something that can be produced, demand is no longer tied to specific applications. It becomes universal, embedded in every process, every system, every interaction.

“There is no question; this is not a one app technology,” Huang explains. “This is now fundamental. This is absolutely a new computing platform shift.” That statement closes the loop, because it positions the token economy not as a feature of AI but as its defining characteristic. The model is no longer the centre of gravity, and the machine is no longer the product. What matters is the ability to produce intelligence, continuously, efficiently, and at scale.

The consequence is a competitive landscape defined not by who has access to AI, but by who can manufacture it most effectively. In that landscape, the token is not just a technical construct; it is the unit of value, the measure of performance, and ultimately the currency of the new computing economy.