Artificial intelligence is reshaping the audio landscape, not by replacing musicians but by becoming a powerful creative partner. Mark Venables explores how AI is transforming music production, restoration and collaboration, unlocking new tools for innovation while raising important questions about authorship, authenticity and scale.

The history of music technology is full of breakthroughs that were first feared, then gradually accepted, before becoming indispensable. Artificial intelligence (AI) is following a similar trajectory, but the stakes feel higher this time. AI is not just changing how music is made; it is changing what music can be.

AI is already embedded in modern audio workflows, from mastering tools and source separation to voice cloning and intelligent Digital Audio Workstations (DAWs). The challenge now is understanding how it fits technically, culturally, economically, and creatively. As AI blurs the boundary between tool and collaborator, a new relationship is emerging between artists and algorithms.

Shifting the production landscape

One of the most mature applications of AI in music is mastering. It was the first place AI could demonstrate value without disrupting the creative core of music making. “Our company was the first to productise AI-based mastering at scale in the music space,” Daniel Rowland, Head of Strategy and Partnerships at LANDR, says. “Initially, we started with AI-driven mixing in partnership with Allen & Heath but pivoted into mastering because it was a more contained and solvable problem with fewer variables.”

Mastering has become a proving ground. Over time, fidelity has improved, user control has expanded, and the results are now competitive with human engineers. Yet even this relatively ‘safe’ area is evolving fast, with AI-powered mixing tools gaining ground. Rowland highlighted the emergence of intelligent plugins and new sound design applications driven by companies like ElevenLabs.

The same shift is occurring in audio restoration. Music producer and hardware expert Dave Tozer recently used AI while working on a 20th-anniversary re-release of John Legend’s ‘Get Lifted’. “We only had a demo from that time – no stems, no multitrack,” he says. “I used AI to isolate the vocal from that two-track demo and placed it over the existing master session. That version now illustrates the song’s evolution. Without stem-splitting AI, this would have been impossible.”

That ability to isolate, extract, and repurpose is now a central feature of modern production. And it is not limited to restoration. Jessica Powell, CEO and Co-Founder of AudioShake, explained how stem separation enables everything from remixing and Dolby Atmos preparation to speech disentanglement and ADR in film. “In music, if you only have a stereo mix, you cannot prepare it for Atmos or licensing opportunities,” she says. “Our technology changes that.”

Reimagining what is possible

While separation and restoration have immediate commercial applications, more radical possibilities emerge when AI takes on generative or interpretive roles. Rafael Valle, Research Scientist and Manager at NVIDIA, sees a broader shift underway. “We are in the middle of a paradigm shift,” he explains. “Instead of building AI for specific tasks, we build models that understand general instructions. It is like talking to a musician.”

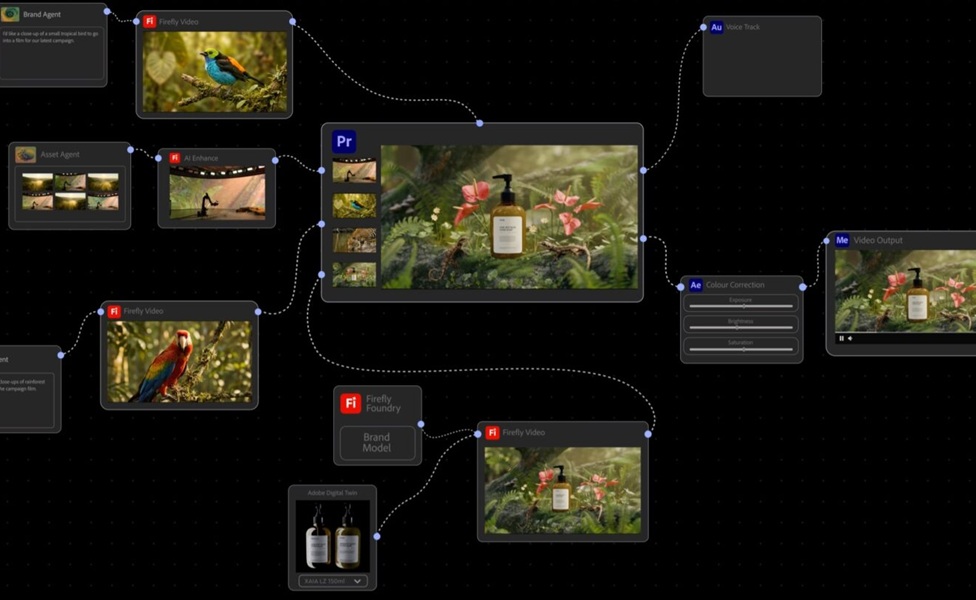

This shift from narrow tools to context-aware systems is not theoretical. It is already happening inside DAWs, where AI is starting to act as an assistant, guide, and creative partner. “They can help users troubleshoot or explain how to achieve a particular effect in context,” Rowland adds. “It points towards a future where DAWs are far more responsive and intuitive.”

Creative misuse is also proving fertile ground. Kord Taylor, consultant and fractional executive at Syntropique, described how some producers deliberately feed distorted samples or noisy inputs into AI tools to generate glitched, unexpected textures. For Rowland, this echoes the ethos of experimental artists like Adrian Belew or Brian Eno. “Curation becomes creation,” he says. “AI can push you outside your creative patterns and help you find something new. It is not just a tool; it is a collaborator.”

Powell agrees with this sentiment: “The early days of GANs were like a psychedelic dream – twenty-fingered hands and impossible landscapes. It gave you new thinking methods, even if you did not use the output directly. I still find that kind of creative friction exciting.”

Unlocking value in forgotten catalogues

Some of the most compelling applications of AI are not futuristic; they are archival. Music labels, studios, and even government archives hold vast catalogues of recordings made before multitrack recording became standard. These tracks are hard to rework, reissue, or monetise without stems. AudioShake’s tools have changed that.

“Jessica’s team has become the industry standard for stem separation,” Rowland explains. “We can now return to Jackson 5 masters or other legacy recordings that never had isolated stems and prepare them for modern platforms. This would have been impossible without AI.”

Taylor added that these tools are equally relevant for pre-war blues, folk, and early jazz recordings held by institutions like the Library of Congress. “There are no stems,” he says. “AudioShake’s tools are the only way to separate and enhance those recordings.” And it goes further. Powell pointed out that very old recordings need more than just separation. “Even after stem separation, you need up-res tools to clean and enhance the audio,” she says. “So it is not just about separation, it is about building a full pipeline of AI tools that work together to rescue and reimagine historic recordings.”

Rights, royalties and revenue models

AI is also forcing a rethink of music’s economic infrastructure. From sync licensing to streaming fraud detection, there is a growing list of practical problems that AI is now solving. Powell highlighted how 30 to 50 per cent of licensing opportunities were historically lost because labels lacked access to clean stems. “AI changed that,” she explains. “Now catalogues previously unusable for sync or Dolby Atmos can be unlocked. That is real, immediate revenue.”

Rowland sees AI’s role in lyric transcription as similarly practical. “Labels often receive lyrics from artists that no longer match the final recorded track,” he adds. “Someone must rewrite them, often an intern. If a platform wants karaoke-style timed lyrics, aligning every word to the audio is painful. AI solves that instantly.”

Remix detection and voice licensing are also being transformed. Tozer explained how companies are allowing singers to upload their voices as models. “Users like me can generate a beautiful vocal from a scratch demo or even MIDI,” he says. “The singer earns royalties every time their voice is used. That is a whole new model for collaboration.”

LANDR, meanwhile, has introduced a system where users earn recurring revenue if their recordings are used to train AI models. “More than they might earn on Spotify,” Rowland adds. “It is a new way for artists to share the value created.”

The cultural dimension of adoption

Despite the progress, resistance remains. The music industry is notoriously slow to embrace new tools, especially when those tools challenge established workflows or perceived authenticity. “There is still a lot of distrust in the music world around AI,” Rowland says. “We idolise the past. With vinyl and tape machines, we cling to the old ways. And AI has introduced an extra layer of suspicion.”

Tozer acknowledged this unease. “As a human, I do instinctively want human-created music to be recognised differently,” he says. “But if I play guitar on one track and use a synth on another, I do not label them differently. So I am not sure where the line is.” Powell believes this debate often misses the point. “We are fixated on whether something is 80 per cent AI or 20 per cent AI,” she says. “But that will fade. The real concern is impact. AI allows for enormous scale, and that scale demands a different kind of scrutiny.”

Rowland points to streaming platforms already receiving 100,000 tracks per day. AI could multiply that tenfold. “Spotify now requires 1,000 streams before they pay out to discourage mass-uploaded AI content,” he says. “We are working with partners to analyse uploaded tracks and determine what percentage of the audio was AI-generated. It is essential to have these checks in place.”

Valle echoed this concern. “Yes, AI can generate millions of tracks daily,” he adds. “But do we need all of them? Without curation and emotional connection, those tracks are just noise.”

“Music is not just sound; it is identity. It is context. It is memory. There is an emotional resonance that cannot be faked,” Taylor says. “If I hear a Duran Duran track, I remember being young. A machine cannot replicate that kind of human connection.”

Looking to the future

For all its power, AI is unlikely to replace musicians. But it will change who can participate, how they work, and what they can create. Valle described his hope of writing a score at home and having a model embody the skills of a conductor or a virtuoso instrumentalist. “This disembodiment of talent, where an artist’s skill can be present and available everywhere at once, is incredibly powerful. But it requires partnerships between musicians and technologists,” he continues.

That collaboration is already underway. As Rowland notes, many of the most effective AI tools in music no longer advertise themselves as such. “It will not be branded as AI,” he explains. “It will just work better. You do not have to use it, but you should understand it. Because it will reshape the landscape.”

Powell puts it plainly. “AI did not create the industry’s biggest problems, but it may finally help us address them.” Tozer, for his part, sees a more intuitive, creative future. “If I want to test multiple grooves under a song to see what fits, AI will help me do that instantly. If I want to learn why a snare on an Amy Winehouse record sounds like it does, AI might break it down and show me. These tools will not just do things for you; they will teach you.”

Perhaps that is the real shift, not just from analogue to digital or human to machine but from static tools to dynamic collaborators. In music, as in many other industries, AI is changing the medium. But it is also changing the message.