AI is moving out of controlled environments and into systems that cannot fail safely. As a result, data infrastructure is no longer a supporting layer; it determines whether the system can function at all.

Physical AI does not degrade quietly when something goes wrong. It fails in ways that are immediate, visible, and operationally disruptive, exposing weaknesses that were previously hidden behind abstraction layers. The tolerance for failure disappears because failure is no longer contained within software; it manifests in the physical world, where systems are expected to run continuously.

“When a chatbot fails, a session resets,” Melody Zacharias, Sr. Microsoft Solutions Manager at Everpure, explains in a recent blog post. “When physical AI fails, production lines halt, autonomous vehicles miss timing windows, robotic systems lose calibration, and safety systems misfire. The stakes are fundamentally different, and that changes how we have to think about the systems underneath it.

“Physical AI is not just generative AI with motors attached. It is latency sensitive, often operating in sub-10 millisecond inference loops. It is stateful, meaning models are continuously retrained and updated. It is safety critical, audit bound and deeply integrated across IT and operational technology environments.”

Once you connect models directly to machines, you are no longer dealing with isolated systems. You are dealing with a closed loop that never stops, and that loop defines the performance, safety, and reliability of the entire operation.

That closed loop is where the scale of the challenge becomes apparent. Systems that were once loosely connected now operate as a continuous pipeline, where ingestion, training, inference, and action occur simultaneously. The system is only as strong as its weakest link, and increasingly that link is not the model.

“In modern smart factories and autonomous environments, you are looking at ten to fifty terabytes of telemetry per day,” Zacharias adds. “You are managing hundreds of millions of small files during training, and checkpointing that can reach tens of gigabytes every hour. This is not batch analytics, it is continuous infrastructure, and it behaves very differently under load.

“If ingestion slows, inference quality degrades. If checkpoints are corrupted, retraining restarts. If throughput drops, GPUs sit idle. At that point, infrastructure is no longer supporting the system, it is determining whether the system can operate at all.”
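To put the quoted telemetry volumes in perspective, the short sketch below converts a daily volume into the average ingest rate the storage layer would have to sustain. It assumes decimal units (1 TB = 10^12 bytes) and a perfectly even load across 24 hours; the function name is illustrative, and real pipelines are bursty, so peak rates will be higher than these averages.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def sustained_rate_mb_per_s(terabytes_per_day: float) -> float:
    """Average ingest rate in MB/s for a daily volume, using decimal units."""
    bytes_per_day = terabytes_per_day * 1e12
    return bytes_per_day / SECONDS_PER_DAY / 1e6


if __name__ == "__main__":
    # The 10 and 50 TB/day bounds are the range quoted in the article.
    for tb_per_day in (10, 50):
        rate = sustained_rate_mb_per_s(tb_per_day)
        print(f"{tb_per_day} TB/day is about {rate:.0f} MB/s sustained")
```

Under these assumptions the quoted range works out to roughly 116 to 579 MB/s of continuous ingest, before any training reads or checkpoint writes are added on top.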

Data gravity and deployment friction

The shift from experimentation to production exposes a constraint that is structural rather than technical. Most AI systems are designed and validated in cloud environments, where data is centralised and compute is elastic. That model breaks down as soon as AI is embedded into physical systems.

“Most enterprise AI projects begin in the cloud,” Zacharias continues. “Teams spin up GPU clusters, prototype quickly, and build initial models. But physical AI cannot remain in the cloud. The moment it connects to manufacturing telemetry, clinical systems, or robotics, the model has to move closer to where the data is generated.

“That is where the friction begins. Petabytes of training data must migrate, checkpoint integrity must be preserved, governance controls must remain intact, and data sovereignty requirements cannot be violated. Meanwhile, GPU clusters are waiting for data movement, and utilisation drops.

“This is not a model problem. It is a data gravity and portability problem. Without consistent data services across environments, AI innovation slows down at the exact point where it needs to scale.”

The implication is that compute is no longer the primary constraint. Organisations that have invested heavily in GPU infrastructure are finding that performance is limited by how effectively data can be moved and managed across environments.

“The challenge is no longer access to compute,” Zacharias says. “It is how effectively data can be orchestrated, moved, and governed across hybrid environments. If that layer is inconsistent, every transition introduces friction, and that friction compounds over time.”

The rise of the AI data factory

The response to this challenge is not incremental optimisation, but a redefinition of architecture. The centre of gravity shifts away from models and towards data, with storage becoming a core component of system design rather than a supporting layer.

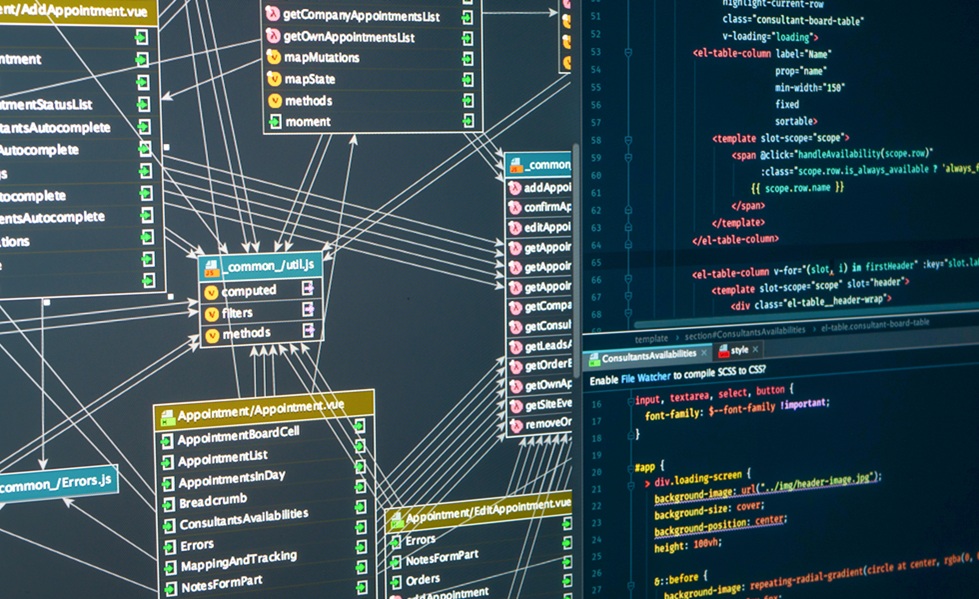

“The industry has talked about the AI factory as an integrated system for ingestion, training, and inference,” Zacharias explains. “For physical AI, that architecture has to extend further into what we call an AI data factory, where data itself becomes the control plane.

“That means building a storage-centric architecture that can sustain high-frequency ingestion, support hundreds of gigabytes per second of throughput, and guarantee checkpoint integrity through immutability and instant rollback. It also means embedding governance and identity directly into the data layer rather than treating them as external controls.”

If you cannot guarantee deterministic latency, the inference loops break. If you cannot guarantee checkpoint integrity, the models become unreliable. If you cannot enforce identity at the data layer, the security model collapses. These are not edge cases; they are fundamental requirements.
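As a small illustration of what checking checkpoint integrity can look like at the application level, the sketch below records a SHA-256 digest next to a checkpoint file and refuses to trust the file if the digest no longer matches. This is an assumption-laden example, not the storage-layer immutability and rollback described above: the function names and the `.sha256` sidecar convention are hypothetical, and a sidecar file can itself be tampered with unless the storage underneath protects it.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large checkpoints need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def seal_checkpoint(path: Path) -> Path:
    """Write the checkpoint's digest to a sidecar file (hypothetical convention)."""
    sidecar = path.with_name(path.name + ".sha256")
    sidecar.write_text(sha256_of(path))
    return sidecar


def verify_checkpoint(path: Path) -> bool:
    """Return True only if a sidecar exists and matches the current contents."""
    sidecar = path.with_name(path.name + ".sha256")
    if not path.exists() or not sidecar.exists():
        return False
    return sidecar.read_text().strip() == sha256_of(path)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        ckpt = Path(tmp) / "model.ckpt"
        ckpt.write_bytes(b"weights-v1")
        seal_checkpoint(ckpt)
        print("intact:", verify_checkpoint(ckpt))
        ckpt.write_bytes(b"weights-v1 (corrupted)")
        print("after corruption:", verify_checkpoint(ckpt))
```

A training job could call `verify_checkpoint` before resuming, falling back to an earlier sealed checkpoint when the check fails instead of restarting from scratch.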

This architecture must also operate consistently across cloud, on-premises, and edge environments. The expectation is no longer that systems can be reconfigured at each stage, but that they maintain continuity as they move closer to where decisions are executed.

“Physical AI is inherently hybrid,” Zacharias adds. “You are operating across public cloud GPU environments, on-prem clusters, and edge systems embedded in operational environments. If your data services differ across those domains, re-architecture becomes inevitable, and that is where progress slows.

“You need policy frameworks that follow workloads rather than forcing governance teams to rebuild controls in each environment. That is what allows data scientists to keep iterating, GPUs to remain saturated, and operational systems to remain stable.”

Security and identity at machine scale

The expansion of AI into physical systems introduces a different kind of security challenge. The number of interacting entities increases dramatically, and most of them are not human.

In many deployments, non-human identities outnumber humans by fifty to one. These include robots, sensors, APIs, autonomous agents, and simulation environments. Traditional identity and access models were designed for users, not for this scale of machine interaction.

“That means identity has to move closer to the data,” Zacharias adds. “You need machine identity frameworks, least-privilege enforcement at the storage layer, and segmentation that operates east-west across the system. Zero trust cannot stop at the network; it must extend into the data itself.

“Without that, the system becomes fragile. You cannot guarantee that data has not been altered, and you cannot guarantee that the system will behave as expected under pressure.”
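The idea of least-privilege enforcement for machine identities can be sketched as a deny-by-default policy check. Everything in this example is hypothetical: the identity names, the path layout, and the `is_allowed` function are invented for illustration and do not correspond to any particular product or to the framework Zacharias describes. A real system would also authenticate the identity first and normalise paths before matching.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    """One permitted action on one path prefix."""
    action: str  # "read" or "write"
    prefix: str


# Hypothetical machine identities, each limited to what its role needs.
POLICY: dict[str, tuple[Grant, ...]] = {
    "sensor-line-3": (Grant("write", "telemetry/line-3/"),),
    "trainer-job": (
        Grant("read", "telemetry/"),
        Grant("write", "checkpoints/"),
    ),
    "inference-edge-7": (Grant("read", "checkpoints/"),),
}


def is_allowed(identity: str, action: str, path: str) -> bool:
    """Deny by default: unknown identities and unlisted actions are refused."""
    grants = POLICY.get(identity, ())
    return any(
        grant.action == action and path.startswith(grant.prefix)
        for grant in grants
    )


if __name__ == "__main__":
    print(is_allowed("sensor-line-3", "write", "telemetry/line-3/0001.bin"))
    print(is_allowed("sensor-line-3", "read", "checkpoints/model.ckpt"))
    print(is_allowed("unknown-robot", "read", "telemetry/line-3/0001.bin"))
```

The point of the sketch is the shape of the rule rather than the mechanism: a sensor can write only its own telemetry, an edge inference node can read checkpoints but never modify them, and anything not explicitly granted is refused.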

At scale, these requirements converge with performance considerations. The same infrastructure that governs access must also sustain throughput, and any weakness in one dimension affects the other.

“When you reach thousands of GPUs, storage throughput becomes the gating factor,” Zacharias continues. “If GPUs are waiting for I/O, capital efficiency collapses. Compute and storage must operate as a single engineered system, not as loosely coupled tiers.”
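A back-of-the-envelope model shows why storage throughput gates GPU utilisation at this scale. The sketch below assumes every GPU needs a fixed data rate to stay busy and that utilisation falls in proportion to any shortfall; both are simplifications, and the specific figures (1,000 GPUs, 0.2 GB/s per GPU, 150 GB/s delivered) are illustrative assumptions rather than numbers from the article.

```python
def required_throughput_gb_s(num_gpus: int, per_gpu_gb_s: float) -> float:
    """Aggregate read rate needed to keep every GPU fed."""
    return num_gpus * per_gpu_gb_s


def gpu_utilisation(delivered_gb_s: float, num_gpus: int, per_gpu_gb_s: float) -> float:
    """Fraction of GPU time doing useful work under a simple I/O-bound model."""
    required = required_throughput_gb_s(num_gpus, per_gpu_gb_s)
    if required <= 0:
        return 1.0  # nothing to feed, so I/O cannot be the limit
    return min(1.0, delivered_gb_s / required)


if __name__ == "__main__":
    gpus, per_gpu, delivered = 1000, 0.2, 150.0  # illustrative values only
    needed = required_throughput_gb_s(gpus, per_gpu)
    utilisation = gpu_utilisation(delivered, gpus, per_gpu)
    print(f"required: {needed:.0f} GB/s, delivered: {delivered:.0f} GB/s")
    print(f"estimated utilisation: {utilisation:.0%}")
```

With these assumed numbers the cluster needs 200 GB/s, so a storage layer delivering 150 GB/s caps utilisation at about 75 percent, which means a quarter of the GPU investment sits idle waiting for I/O.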

The hidden risk in core systems

The same structural weaknesses are visible in the systems that underpin everyday business operations. The difference is that they have been tolerated for longer, often misdiagnosed as application-level issues rather than infrastructure constraints.

When an airline check-in stalls, a retailer’s cart spins, or a financial close is delayed, the root cause often traces back to the database engine. These systems feed every downstream operation, and when they lag, the entire business feels it.

“Many of these environments are still running on legacy storage systems that were designed for a different era,” Zacharias explains. “As data volumes grow and workloads diversify, those systems require more manual intervention, lack resiliency, and introduce risk that extends beyond IT.

“The ripple effects are measurable. Downtime leads to lost revenue, missed service level agreements, and reputational damage that outlasts the outage. These are no longer hypothetical scenarios; they are costs that organisations review every quarter.”

What makes this more complex is that the symptoms are often attributed elsewhere. Performance issues are blamed on applications or databases, while the underlying constraint remains unaddressed. “Slow queries, missed batch windows, and frozen dashboards are often diagnosed as application problems,” Zacharias notes. “In many cases, the root cause is an overtaxed and outdated storage layer that cannot sustain modern workloads.”

Infrastructure as a strategic decision

The shift underway is not purely technical. It changes how organisations think about risk, investment, and competitive advantage. Infrastructure decisions are becoming directly tied to business outcomes, rather than confined to operational efficiency.

“Board members do not vote on storage architectures, but they do approve investments tied to revenue protection, customer experience, and operational resilience,” Zacharias says. “Storage now intersects with all of those priorities.

“When infrastructure is no longer a bottleneck, the effects are immediate. Customer interactions improve, financial processes accelerate, and development cycles shorten. These are operational changes that translate directly into competitive advantage. The organisations that succeed in this next phase will not be the ones with the largest models. They will be the ones that build data infrastructures capable of sustaining real-world operations at scale.”

That distinction reframes the conversation around AI. The focus shifts away from what models can do, and towards whether the systems beneath them can support those capabilities consistently and safely.

“When AI moves into the physical world, there is no separation between software and infrastructure,” Zacharias concludes. “The system either works continuously, or it fails visibly. At that point, storage is not a background concern, it becomes the backbone of trust.”